The item inside every digital camera that adds at least 50% to the price is the converter that transforms light radiation into binary digits. You can think of it as digital film, yet it is more akin to a scanner than to a roll of silver-halide-coated plastic.

Not many converters yet exist. The current converter du jour is the Charge-Coupled Device (CCD), which was invented at Bell Labs and first came to prominence in astronomical imaging, later hitting it big in camcorders. An alternative known as CMOS exists, but its quality as found in digital cameras is currently on the low end. The CMD system lurks on the horizon, but it will be some time before it is ready for prime time.

Where History Is Forged

CCDs actually had their start in another idea: magnetic bubble memory (MBM). Both gushed from that veritable font of fecundity--Bell Labs--in the late 1960s: MBM in 1966, and the CCD in 1969. The research at the time at Bell Labs was not even heading towards imaging systems; instead they were aiming for new technologies for computer memory. Bell Labs nailed it with MBM and CCDs, and in fact incorporated them into products; unfortunately, they were not long viable in competition with dynamic random access memory. The concept behind magnetic bubble memory--manipulating energies to store bits of information--intrigued researchers working with silicon devices. Back in 1969, Willard Boyle and George Smith "started batting ideas around," according to Smith, "and invented charge-coupled devices in an hour. Yes, it was unusual--like a light bulb going on." Though abandoned as an idea for memory, their device led to the invention of the first solid-state television camera in 1970, and the design in 1975 of the first CCD television camera that met resolution requirements for commercial use.

The first use of CCDs in a still camera was in the astronomical observatory. Tony Tyson, also of Bell Labs, has been foraging for dark matter in the Universe for years. His research work in the refinement of CCDs led to the first CCD camera installation in 1979--at Lowell Observatory in Flagstaff, Arizona. Today, virtually all optical observatories use CCDs.

CCDs have since filtered down into camcorders, replacing the smeary and fragile pickup tubes; scanners; and even barcode readers, where CCDs have an advantage because they can "see" far more of the code than the one-dimensional laser, and can even read codes that wrap around cylinders--something laser readers don't do well. And, of course, the digital camera.

How Things Work

Light is energy. Light is radiation. As Einstein showed, one way to approach the study of light is to say that light arrives in packets of energy called quanta, each carrying an energy proportional to the frequency of the radiation it represents. In other words, light that represents the color red, which is a lower frequency of radiation than, say, the light that represents violet, correspondingly comes in quanta of lower energy than the quanta of violet light. These quanta are also called photons.
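To put rough numbers on the red-versus-violet comparison, here is a minimal sketch that computes the energy of a single photon from the relation E = h * f. The function name `photon_energy_joules` and the two wavelengths (about 700 nm for red, about 400 nm for violet) are illustrative assumptions, not figures from this article.

```python
# Planck's constant (J*s) and the speed of light (m/s): standard physical constants.
PLANCK_H = 6.62607015e-34
LIGHT_SPEED = 2.99792458e8


def photon_energy_joules(wavelength_nm: float) -> float:
    """Energy of one photon: E = h * f, where f = c / wavelength."""
    frequency_hz = LIGHT_SPEED / (wavelength_nm * 1e-9)
    return PLANCK_H * frequency_hz


# Assumed, illustrative wavelengths: ~700 nm for red, ~400 nm for violet.
red = photon_energy_joules(700.0)
violet = photon_energy_joules(400.0)

print(f"red photon:    {red:.3e} J")
print(f"violet photon: {violet:.3e} J")
print(f"violet/red energy ratio: {violet / red:.2f}")
```

Because energy scales with frequency, the ratio depends only on the two wavelengths chosen: a violet photon at 400 nm carries 700/400 = 1.75 times the energy of a red photon at 700 nm.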

Photoelectric cells (like in solar paneling) can essentially capture these quanta and send them down wires as electrons (the principal carrier of electricity). This is the first stage in a CCD digital camera: the snaring of light by photoelectric cells, and the transference of the energy down wires to the actual CCD.

A CCD is a collection of specialized interconnected capacitors called metal insulator capacitors. The capacitors can be strung out in a line, or form a rectangular array (scanners and faxes use the line approach; video and cameras use the rectangular array). Each capacitor creates a well that can store electrons. If too many electrons are stored in a well, they can spill over into surrounding electron wells (which is why it looks particularly bad when a digital image is overexposed). When the capacitors are finished collecting, they are then emptied one by one and expressed as a voltage.
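The spill-over behavior can be pictured with a toy model of a single line of wells. This is only a sketch under loud assumptions: the function names `bloom` and `_spill` are hypothetical, the excess is split evenly between the two directions, each neighbor fills to capacity before the charge moves on, and charge that runs off the end of the line is simply lost. Real devices behave in more complicated ways.

```python
def _spill(wells, start, step, amount, capacity):
    """Push `amount` electrons outward from `start`, filling each well up to capacity."""
    position = start + step
    while amount > 0 and 0 <= position < len(wells):
        room = max(0, capacity - wells[position])
        moved = min(room, amount)
        wells[position] += moved
        amount -= moved
        position += step
    # Any charge still left here has run off the end of the line and is lost.


def bloom(wells, capacity):
    """Return a copy of `wells` after overfull wells have spilled into their neighbors."""
    result = list(wells)
    for index, charge in enumerate(wells):
        excess = charge - capacity
        if excess <= 0:
            continue
        result[index] = capacity
        left_share = excess // 2          # assumption: excess splits evenly
        right_share = excess - left_share
        _spill(result, index, -1, left_share, capacity)
        _spill(result, index, +1, right_share, capacity)
    return result


# One badly overexposed well (300 electrons in a well that holds 100).
print(bloom([0, 90, 300, 90, 0], capacity=100))  # -> [90, 100, 100, 100, 90]
```

In the example, a single overexposed cell ends up brightening four of its neighbors, which is the smeared highlight the paragraph above describes.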

In a digital camera CCD, this analog signal is then converted to a binary stream by an analog-digital converter, and is then sent down the line to whatever digital storage device is being employed (like RAM). In nondigital devices, the signal is left in its analog form. In NTSC camcorders, for example, the voltages are smoothed out into a luminance signal, which is then combined with sync signals to form NTSC video.
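The analog-to-digital step amounts to mapping a voltage onto one of a fixed number of integer codes. The sketch below assumes an 8-bit converter and a 1.0 V full-scale input; both values, and the function name `quantize`, are illustrative assumptions rather than the specification of any particular camera.

```python
def quantize(voltage: float, full_scale_volts: float = 1.0, bits: int = 8) -> int:
    """Map an analog voltage to the nearest integer code of an ideal converter."""
    top_code = 2 ** bits - 1
    # Voltages outside the converter's range are clipped to its limits.
    clamped = min(max(voltage, 0.0), full_scale_volts)
    # Adding 0.5 before truncating rounds to the nearest code.
    return int(clamped / full_scale_volts * top_code + 0.5)


for volts in (0.0, 0.25, 0.5, 1.0, 1.7):
    code = quantize(volts)
    print(f"{volts:4.2f} V -> code {code:3d} -> bits {code:08b}")
```

Note that the 1.7 V input comes out with the same code as 1.0 V: once the signal exceeds the converter's range, the extra brightness information is gone.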

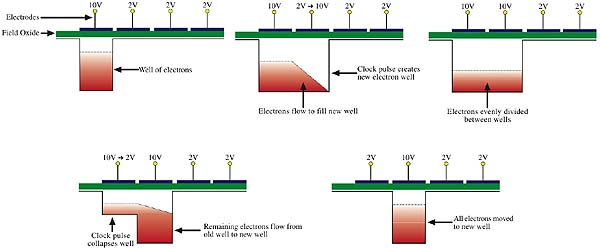

One interesting thing about a CCD is the way its contents are read. Not all the capacitors are read at once; instead they are read sequentially, one by one, their contents zapped down the line, and then the receiving device (such as a computer) stores a representation and remembers which cell each signal came from. As there is only one exiting line, the electrons must be passed from well to well and then be read serially. It's this passing of electrons that makes the charge-coupled device truly a unique item.

To pull it off, "clock" pulses of voltage are sent across the capacitors. The voltage creates a well under an empty capacitor that is accessible to the electrons in the neighboring well. The electrons of that neighboring well then flow to fill both wells. The well the electrons came from then collapses, forcing its remaining electrons into the new well. In this manner all of the electrons are transferred from well to well.
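The two-phase hop (share, then collapse) can be sketched for a single row of wells. The names `transfer`, `shift_row`, and `read_row` are hypothetical, the output is assumed to sit at the right-hand end of the row, and the even split in the first phase is a simplification.

```python
def transfer(source_charge: float):
    """Move one charge packet into an empty neighboring well in two phases."""
    # Phase 1: the clock pulse opens the empty neighbor and the charge
    # spreads across both wells (assumed here to split evenly).
    source = neighbor = source_charge / 2
    # Phase 2: the source well collapses and its remainder is pushed across.
    neighbor += source
    source = 0.0
    return source, neighbor


def shift_row(row):
    """One clock cycle: every packet hops one well toward the output on the right."""
    output_sample = row[-1]            # the packet in the last well leaves the row
    shifted = [0.0] * len(row)
    for index in range(len(row) - 1):
        _, shifted[index + 1] = transfer(row[index])
    return shifted, output_sample


def read_row(row):
    """Clock the row until it is empty, collecting samples in the order they exit."""
    samples = []
    for _ in range(len(row)):
        row, sample = shift_row(row)
        samples.append(sample)
    return samples


print(read_row([5.0, 9.0, 2.0]))  # -> [2.0, 9.0, 5.0]
```

The packets exit in reverse order of their position, nearest the output first, which is why the receiving side has to keep track of which cell each sample belongs to.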

When the last row is empty, a different clock pulse fires at a perpendicular angle, and the next row of electrons hops at once to the vacant row. This process continues until all of the rows have hopped, leaving the first row empty. Then the previous pulse returns, and the now full last row starts emptying cell by cell. Eventually the entire CCD will be empty, and will then be ready for use again.
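The whole-array readout order can be sketched the same way: drain the last row cell by cell, hop every remaining row down by one, and repeat. The function names `read_frame` and `rebuild` are hypothetical, and the single output is assumed to sit at the end of the last row.

```python
def read_frame(frame):
    """Return (stream, wells): samples in exit order, and the emptied array."""
    wells = [list(row) for row in frame]
    height, width = len(wells), len(wells[0])
    stream = []
    for _ in range(height):
        # Serial clock: drain the last row one cell at a time through the output.
        for _ in range(width):
            stream.append(wells[-1][-1])
            wells[-1] = [0] + wells[-1][:-1]
        # Perpendicular clock: every row hops one step toward the now-empty
        # last row, which leaves the first row empty.
        wells = [[0] * width] + wells[:-1]
    return stream, wells


def rebuild(stream, height, width):
    """Receiving side: put each sample back at the cell it came from."""
    image = [[0] * width for _ in range(height)]
    for index, value in enumerate(stream):
        row = height - 1 - index // width
        column = width - 1 - index % width
        image[row][column] = value
    return image


frame = [[1, 2, 3],
         [4, 5, 6]]
stream, emptied = read_frame(frame)
print(stream)                 # -> [6, 5, 4, 3, 2, 1]
print(rebuild(stream, 2, 3))  # -> [[1, 2, 3], [4, 5, 6]]
```

Every sample leaves through the one output, so the readout time grows with the total number of cells in the array.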

The speed with which the CCD can divest itself of its electrons determines how long a digital camera user has to wait before taking the next picture.

When Colors Attack

We have now seen how a CCD can be used to perceive and record the presence of radiation at the frequency band of light. However, this does not yet explain how to distinguish and record gradations of that band. In other words, we so far have only learned how to see in black and white and not yet in color. Using one or more CCDs to successfully record color adds layers of complexity to the CCD digital camera model we have been using. It also doesn't help that there are several schemes to go about it. But persevere--it'll be good for you.

Have you ever heard that white light is the mixture of all colors, and black is the absence of all colors? There are actually three components to white light: red, green, and blue light. Each of these colors has a frequency associated with it, and every visible color is created by mixing these three primary colors (this is why your computer monitor is called an "RGB" monitor: it emits varying degrees of each of these three colors). In that way white light becomes the summation of all colors. Similarly, if none of these components are present, one's retina has no light radiation hitting it, so the retina detects no color and interprets this phenomenon as black. In that way black "light" is the absence of all colors.

Hence, all color recording schemes for CCD based digital cameras (actually, all cameras period) revolve around how to differentiate between, capture, and register those three components of white light: red, green, and blue. The method used in any particular camera depends on price, speed, function, and resolution.

Since the photoelectric effect results in the same frequency of radiation as is applied to it from light, the frequencies of color would also be preserved. Therefore, individual CCD cells can be "attuned" to respond to different colors, depending on the degree to which each color is received by the photocell.

At the outset, CCDs are more responsive to low frequency light (red) than to high (blue), and are in fact receptive to infrared frequencies. Contrast this with the human eye, which is most receptive to green light--frequencies in the middle of the light band. Filters are used before the CCD stage to either beef up or knock out certain light frequencies, or to allow a complete infrared system. Lastly, temperature fluctuations cause a phenomenon known as "dark current" to occur, creating random noise in the CCD. One way to account for this is to keep the CCD really cold (which is what astronomical observatories do), or just to isolate it from variances in clime.

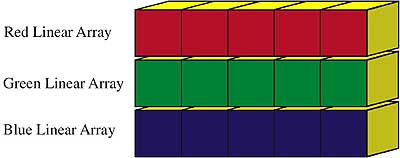

Colored imaging routines differ primarily by how many exposures are required and how the CCD is utilized. In a linear scanning CCD (again, such as with a scanner or a fax), there is only one method--a trilinear array. There are three rows of CCD cells, each row corresponding to a different color. At each stop of the array, the image is captured by the CCD, and then recorded onto one's storage medium. The three rows do not take a picture of the same row--each row records the image at its corresponding row. When the array moves one row forward, the rows image a one-row displacement.
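Because the three sensor rows look at different lines of the subject at each stop, the software has to line them back up afterward. The sketch below is one way to picture that. The one-line spacing between sensor rows, the red-green-blue ordering, and the names `scan` and `assemble` are all assumptions made for illustration, and each scan line is collapsed to a single (red, green, blue) sample to keep it short.

```python
CHANNELS = ("red", "green", "blue")
# Assumed spacing: each sensor row trails the previous one by one scan line.
OFFSETS = {"red": 0, "green": 1, "blue": 2}


def scan(scene):
    """Step the array across the scene; each stop records one line per color row."""
    stops = len(scene) + max(OFFSETS.values())
    captures = []
    for stop in range(stops):
        capture = {}
        for index, channel in enumerate(CHANNELS):
            line = stop - OFFSETS[channel]
            # A sensor row that is not yet (or no longer) over the scene sees nothing.
            capture[channel] = scene[line][index] if 0 <= line < len(scene) else None
        captures.append(capture)
    return captures


def assemble(captures, line_count):
    """Rebuild full-color lines by pulling each color from the stop that saw that line."""
    return [
        tuple(captures[line + OFFSETS[channel]][channel] for channel in CHANNELS)
        for line in range(line_count)
    ]


scene = [(10, 20, 30), (40, 50, 60), (70, 80, 90)]
captures = scan(scene)
print(captures[1])                  # red sees line 1, green sees line 0, blue sees nothing yet
print(assemble(captures, len(scene)) == scene)  # -> True
```

Under these assumptions a three-line scene needs five stops, since the trailing rows have to catch up before every line has been seen in all three colors.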

As long as the object being imaged remains rock steady--such as items on a scanner bed, or a fruit still life--a trilinear array can attain much higher levels of resolution than can a matrix array. Trilinear arrays are also cheaper to build, because their production failure rate is considerably lower than that of matrices.

To capture moving objects, such as squealing children, a CCD matrix must be employed, and a much more clever color scheme must be devised. Possible methods include one exposure with three matrices, one exposure with one matrix, three exposures with one matrix, and multiple exposures with a single matrix.

The simplest method on the one shot side of the fence is the "one shot triple matrix." This means there are three matrices, each receptive to a different color (red, green, or blue). The white light composite image enters the lens, is divvied up by a prism into those three colors, and then is fed to the corresponding array. The light can also be split by semi-reflective mirrors in lieu of prisms.

The next way camera makers can pull it off with one exposure is with a one shot, single array setup. Each CCD cell is attuned to one of the three colors, though they are predominately green, since that is the color the human eyeball is most sensitive to. The problem with this is that four CCD cells represent one pixel, so the gaps have to be interpolated. As you can see, a color CCD array employing this scheme has only one fourth the resolution of a monochrome array. High resolution shots are still possible, however: it just requires more capacitors.
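The gap-filling step can be sketched in a few lines. The 2x2 filter layout below (two green cells, one red, one blue) is an assumed arrangement consistent with the "predominantly green" description, not one confirmed for any particular camera, and the neighbor averaging is a deliberately simple stand-in for whatever interpolation a real camera uses. The names `mosaic`, `interpolate`, and `filter_color` are hypothetical.

```python
# Assumed 2x2 filter tile, repeated across the array: two green, one red, one blue.
PATTERN = (("G", "R"),
           ("B", "G"))
CHANNEL_INDEX = {"R": 0, "G": 1, "B": 2}


def filter_color(row, column):
    """Which color the cell at (row, column) is attuned to."""
    return PATTERN[row % 2][column % 2]


def mosaic(image):
    """What the array records: one color value per cell, the rest discarded."""
    return [
        [pixel[CHANNEL_INDEX[filter_color(r, c)]] for c, pixel in enumerate(line)]
        for r, line in enumerate(image)
    ]


def interpolate(raw):
    """Fill in the two missing colors at each cell by averaging nearby cells."""
    height, width = len(raw), len(raw[0])
    result = []
    for r in range(height):
        line = []
        for c in range(width):
            pixel = []
            for channel in "RGB":
                if filter_color(r, c) == channel:
                    pixel.append(raw[r][c])     # measured directly, keep as is
                    continue
                # Average the cells of this color within one step of (r, c).
                samples = [
                    raw[rr][cc]
                    for rr in range(max(0, r - 1), min(height, r + 2))
                    for cc in range(max(0, c - 1), min(width, c + 2))
                    if filter_color(rr, cc) == channel
                ]
                pixel.append(sum(samples) / len(samples))
            line.append(tuple(pixel))
        result.append(line)
    return result


flat = [[(200, 100, 50)] * 4 for _ in range(4)]   # a uniformly colored test patch
raw = mosaic(flat)
print(raw[0])                     # -> [100, 200, 100, 200]
print(interpolate(raw) == flat)   # -> True
```

A flat patch of color comes back intact, but fine detail would not: each interpolated value is a guess built from neighboring cells, which is where the lost resolution goes.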

The next two systems require the camera to take more than one exposure; therefore they require a very stationary "target," unlike the one shotters, which can image moving objects. The first and simplest is to take three different pictures, each with a different colored filter: red, green, or blue. All CCD elements read the red information, dump it, then read the green information (after the filter changes), dump it, then lastly read the blue information. Problems with this system can include color imbalance caused by light variations between exposures, and color fringes on object edges caused by filter misalignment.
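The three-exposure scheme, and the color imbalance it is prone to, can be illustrated with a toy scene. The names `expose` and `combine` and the `light_level` parameter are hypothetical. The filters are modeled as ideal, each passing only its own color, and a change in lighting is modeled as a simple multiplier.

```python
def expose(scene, channel, light_level=1.0):
    """One monochrome exposure through a single color filter (0=red, 1=green, 2=blue)."""
    return [[pixel[channel] * light_level for pixel in row] for row in scene]


def combine(red, green, blue):
    """Stack three monochrome exposures back into one color image."""
    return [
        [(r, g, b) for r, g, b in zip(red_row, green_row, blue_row)]
        for red_row, green_row, blue_row in zip(red, green, blue)
    ]


# A neutral gray subject: equal red, green, and blue everywhere.
scene = [[(100, 100, 100), (200, 200, 200)]]

steady = combine(expose(scene, 0), expose(scene, 1), expose(scene, 2))
print(steady)    # -> [[(100.0, 100.0, 100.0), (200.0, 200.0, 200.0)]]

# Same subject, but the light drops by half before the blue exposure.
drifted = combine(expose(scene, 0), expose(scene, 1), expose(scene, 2, light_level=0.5))
print(drifted)   # -> [[(100.0, 100.0, 50.0), (200.0, 200.0, 100.0)]]
```

In the second run the gray subject comes out short on blue, which is the color imbalance between exposures that the paragraph above warns about.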

Another method is to actually attune different parts of the photocell (not CCD cell) to different frequencies of light. That way, the number of cells (photo and CCD) remains the same, and there are no gaps in color information between CCD cells. The camera takes a number of pictures, each time hitting the photocells with the image beam at a different place. After each shot the CCD is read, reset, and the process begins again until all detail has been recorded. The only way this method can be accomplished is by using high-resolution lenses and piezoelectric crystals that can shift the light beam by as little as one micron (10^-6 m).

Who Watches the Watchmen?

The digital retina by which we view the world improves every day as technological advancements progress. Today it is the CCD; tomorrow may be an improved CMOS. Yet our own fleshy retinas remain unchanged over the span of our life. We are bystanders amidst digital evolution, where technology flops and gasps upon the steel beach, then summarily hyperdrives through generational iterations right before our very eyes. Yet it seems the vast majority of technology evolves to ape its creators--technology forged in the image of humanity. It will be interesting to see when the engineering begins to go the other way--humanity forging itself into the digital image of its technology, by using what it has learned through creating that technology. Thus we enter a cybernetic feedback loop. As captured in the eye of the CCD.